Hello, I'm

Sawera Yaseen

I am an Erasmus Mundus Scholar in Intelligent Field Robotic Systems (IFRoS). This joint master’s program is offered by Universitat de Girona, Spain, and the University of Zagreb, Croatia. Currently, I am working on my Master’s thesis at the Smart Mechatronics and Robotics (SMART) research group at Saxion University of Applied Sciences. My research focuses on the detection of invisible obstacles for drone obstacle avoidance. I am passionate about robot perception and autonomous navigation, with a strong interest in applying robotics and AI to solve real-world challenges. My goal is to contribute to cutting-edge advancements in robotics, particularly in intelligent autonomous systems.

Education

2023 – Present

Erasmus Mundus Joint Master's in Intelligent Field Robotic Systems

- Semester 1 & 2 – University of Girona: Autonomous Systems, Multiview Geometry, Machine Learning, Probabilistic Robotics, Robot Manipulation, Hands-on Planning, Hands-on Perception, Hands-on Localization, Hands-on Intervention

- Semester 3 – University of Zagreb (Specialization in Aerial Robotics and Multi-Robot Systems): Aerial Robotics, Multi-Robot Systems, Deep Learning, Robotic Sensing Perception and Actuation, Human-Robot Interaction, Ethics and Technology

2018 – 2022

Bachelor's in Mechatronics Engineering (CGPA: 3.95/4.00)

Mehran University of Engineering and Technology, Jamshoro, Pakistan

Thesis: Deep Learning-Based Smart Spraying System for Disease Detection of Vegetables

Projects

Controlling a Swarm of Crazyflies Using Reynolds Rules and Consensus Protocol

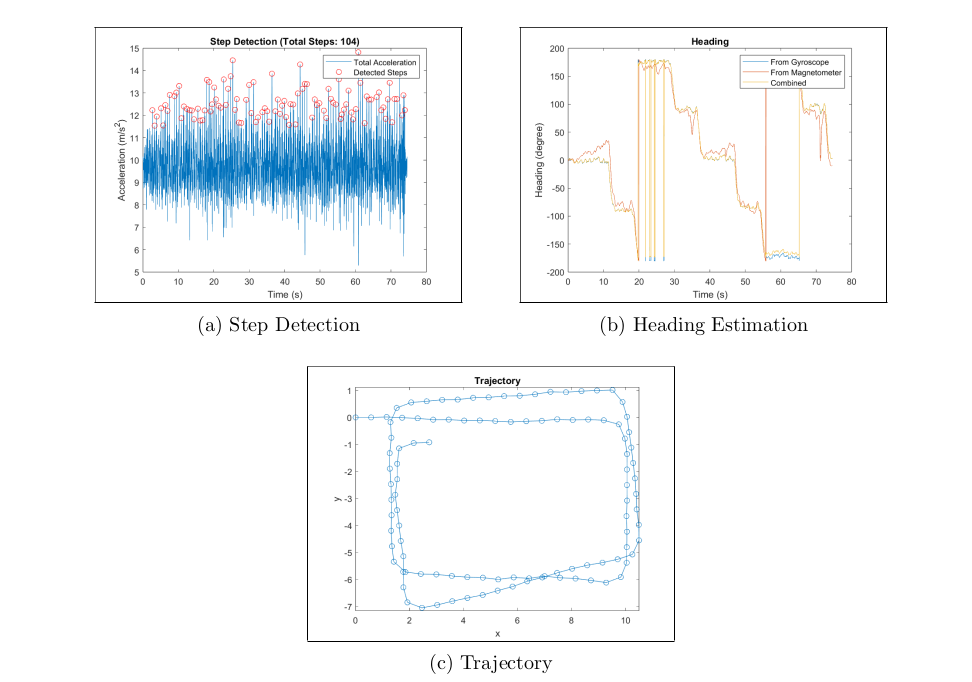

Pedestrian Dead Reckoning (PDR)

Human Detection and Tracking

Stereo Visual Odometry Using the KITTI Dataset

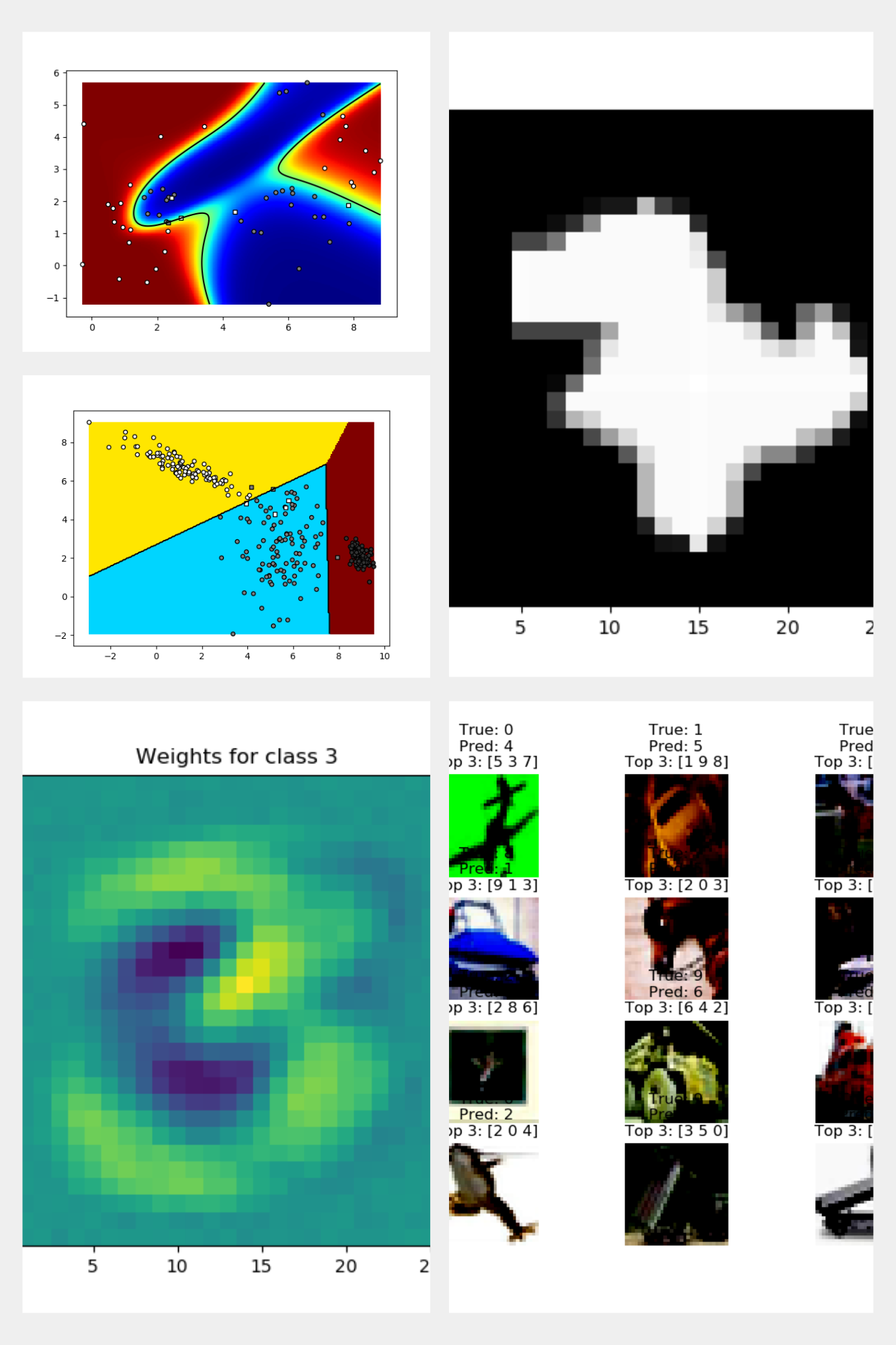

Deep Learning Lab Work

Aerial Robotics Lab Work

Frontier-Based Exploration

EKF-Based SLAM Using ArUco Markers

Kinematic Control System for a Mobile Manipulator

Real-Time 2D Pose Estimation for Automated Parts-Picking

TurtleBot Online Path Planning

Autonomous Object Handling with Behavior Trees in TurtleBot

Task-Priority Kinematic Control

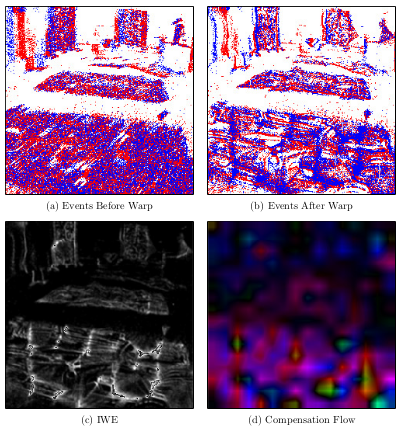

Event-based Cameras

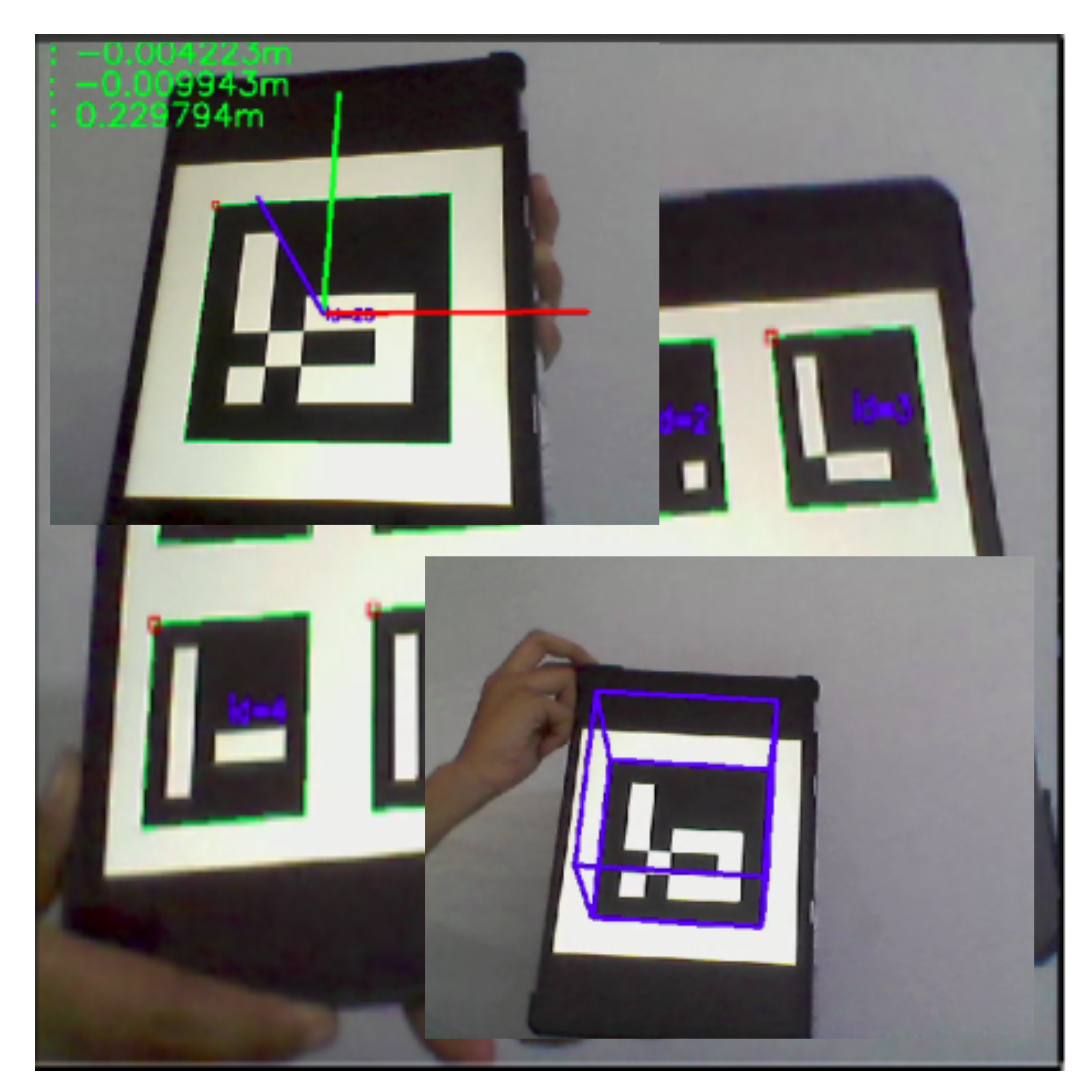

Computer Vision Applications Using ArUco Markers

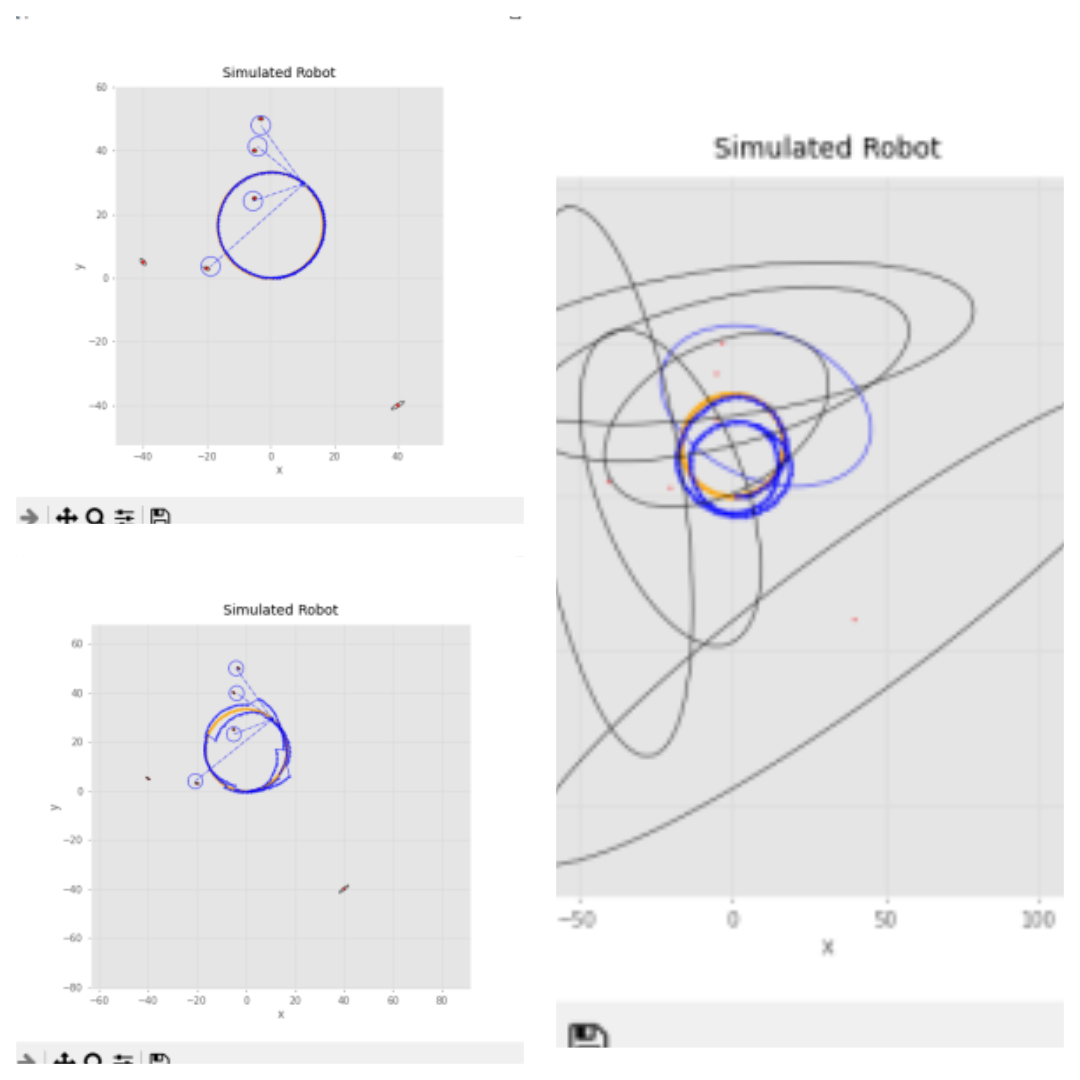

Feature-Based Extended Kalman Filter (FEKF) for SLAM

Palletizing Operation with the UR3e Collaborative Robot

Classification and Pick-and-Place with the Staubli TS-60

Assembly and Pick-and-Place with the Staubli TX-60

Reinforcement Learning-Based Path Planning

Deep Learning-Based Smart Spraying System for Disease Control of Vegetables (Bachelor's Final Year Project)